Honey Bee Dead Reckoning

Can honey bee navigation explain human cognition?

I am amazed that so many people conflate Artificial Intelligence (AI)—with its narrow and fixed competence—with natural intelligence and its broad and adaptive competence. AI beats us at chess, it beats us at knowledge retrieval games like Jeopardy!, and it will soon drive cars better than we do. But honey bee cognition remains wider in scope, deeper in competence, more reliable, and more adaptive than any existing AI or robotics application.

You don't believe me? Allow me to give one example of a typical honey bee cognitive task:

A dark nest, packed with vertical wax combs and tens of thousands of sisters, holds a two-week-old worker (female) honey bee. She feels vibrations in the comb caused by the dance of an older worker bee, a forager that just returned with nectar from a productive patch of flowers. The vibrations from this forager's dance inform the young bee of the distance and direction to the nectar source. This young worker bee can also smell the flowers on the dancer.

After a few orientation flights[1], the young bee flies off to a destination she has never been to before…potentially miles distant. After finding the advertised flower patch and gathering her fill of nectar or pollen, she makes a beeline (a laser-straight path) back to her nest in a tree in a forest of similar-looking trees, all without GPS. She avoids obstacles along the way. Oh, and she is legally blind[2]. She cannot see the hole in her tree leading to her nest until she is within a couple of feet of it.

A brain with fewer than a million neurons makes this everyday miracle possible. Our best AI and robotics research labs and militaries have nothing that compares with this level of intelligence or autonomy. Instead, their drones depend on 1) the Global Positioning System (GPS), 2) power-hungry Nvidia processing units, and 3) active ranging sensors like lidar, radar, and sonar. These power-hungry components (not to mention electric motors) require large batteries, which make drones heavy and shorten flight times. Dependence on GPS and active ranging sensors makes them susceptible to countermeasures. Modern robots and drones (see my posts The Hobbyists That Enabled Ukraine’s Game-Changing Assault and Beyond AI), impressive as they are, are not nearly as autonomous, intelligent, or energy efficient as a honey bee.

This suggests to me that computer scientists, robotics researchers, and military planners are looking for solutions in the wrong places. They should ask how honey bees do what they do…and then duplicate that in silicon. That's my strategy in a nutshell[3].

What follows is a summary of my attempt to replicate how honey bees navigate. Why? Because I suspect that our ability to use symbols, language, and reasoning began long ago with a faculty for navigation (more on this below). In this post, I describe one small part of honey bee navigation that has many names: it is called path integration by biologists, visual odometry by computer scientists, Simultaneous Localization and Mapping (SLAM) by roboticists, and dead reckoning by sailors. Visual odometry by itself is not accurate enough for honey bee navigation because small measurement errors (called drift) accumulate into big errors. Bees fix these errors by fusing other sources of heading information and using visual landmark recognition; I outlined this in my post A Honey Bee's First Orientation Flight.

There are a lot of robotics PhD students implementing visual odometry. My approach is different because it imposes many of the same constraints that evolution has placed on honey bees.

No active ranging. Active ranging sensors include radar, lidar, sonar (ultrasonic), and other electro-optic rangers. Bees navigate and avoid obstacles fine without them.

Monocular vision only. No stereo depth perception. Honey bees have five eyes. The two largest are compound eyes that are so close together and have so little visual overlap they function as one wide-angle camera. I use only a single passive camera with a wide-angle lens. Bees infer proximity and time-to-collision from forward and lateral motions called saccades (different from retinal saccades).

Low resolution. Humans can differentiate roughly 66 equally-spaced lines within a single degree of arc (the width of your thumbnail at arm’s length). The legal definition of blindness is 10 lines per degree of arc or less. Honey bees can see only one line per degree of arc, yet they can find their nest from miles away. Honey bees are an existence proof that visual odometry does not require high-resolution sensors.

Computationally simple. Honey bees have fewer than one million neurons in their brains. They use these neurons for a lot of other things besides navigation. I expect to run this algorithm in real-time on an inexpensive Raspberry Pi processor card with processing power to spare. That is possible because the algorithm uses only feed-forward computations: no top-down direction, no iterative or recursive loops. The human brain uses feedback loops for learning and high-level reasoning, but not for most pre-attentive or reactive behaviors.

Optic flow measures distance. Ethological research has shown that honey bees use the movement of visual objects across their field of view (optic flow) to measure distance and avoid obstacles. My algorithm does the same.

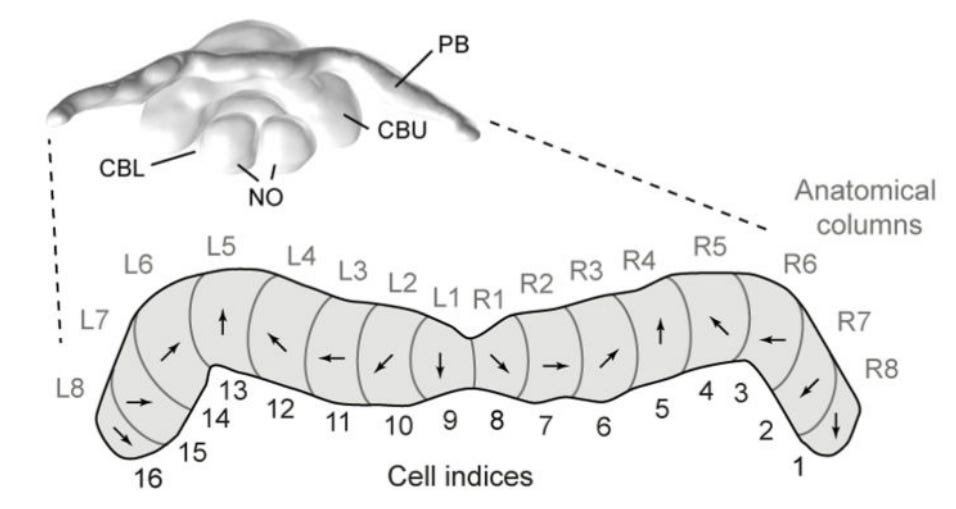

Vision alone is sufficient. Many approaches to visual odometry require inertial sensing, altitude determination, or other non-visual sensing. This algorithm relies on vision alone for odometry and mapping, though combining magnetic compass data and inertial data (gyroscopes and accelerometers) will improve precision and accuracy. Honey bees have both vestibular and magnetic senses and an attractor network to fuse all three heading sources (see protocerebral bridge below).

These constraints make honey bee navigation (and my algorithm) fast and efficient, both computationally and energy-wise[4]. That honey bees have been foraging for over 90 million years suggests that their approach to navigation is robust and worthy of duplicating.

There are some things that traditional visual odometry algorithms calculate that honey bees and this algorithm have no need for.

Bees do not need altitude information. More on this below.

Bees do not need 3D reconstructions. This demands an amount of memory that bees lack and, frankly, an inefficient representation they do not need.

Scale ambiguity is not an issue. The absolute scale of a rendered map is difficult to infer from a monocular camera without external sources. Bees don't care about scale because they don't read maps. What is important is knowing how soon a collision might occur—they can figure that out without knowing their absolute speed.
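The claim that collision timing needs no absolute speed can be illustrated with the standard time-to-contact relation from the optic flow literature: for an approaching object of angular size θ, the time remaining is roughly θ divided by its rate of growth. The sketch below is only an illustration of that relation, not the algorithm used in this post; the function names and the two test scenes are hypothetical.

```python
import math

def angular_size(width, distance):
    """Visual angle (radians) subtended by an object of a given width."""
    return 2.0 * math.atan(width / (2.0 * distance))

def time_to_contact(theta_prev, theta_now, dt):
    """Seconds until contact, from two angular sizes alone (tau = theta / theta_dot)."""
    theta_dot = (theta_now - theta_prev) / dt
    if theta_dot <= 0.0:
        return math.inf  # the object is not looming, so no collision is predicted
    return theta_now / theta_dot

def simulate(width, distance, speed, dt=0.01):
    """Width, distance, and speed are hidden from the observer; it only sees angles."""
    before = angular_size(width, distance)
    after = angular_size(width, distance - speed * dt)
    return time_to_contact(before, after, dt)

# Two scenes with the same true time-to-contact (about 2 s) at very different scales:
# a 0.1 m twig 2 m away at 1 m/s, and a 10 m tree 200 m away at 100 m/s.
tau_small = simulate(width=0.1, distance=2.0, speed=1.0)
tau_large = simulate(width=10.0, distance=200.0, speed=100.0)
```

Both scenes yield essentially the same estimate because they are geometrically similar: the observer cannot tell them apart, and does not need to.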

My comments above refer to what bees need and don't need. However, I believe the same holds true for all animals, including you and me. Do you know how fast you walk? What is the distance between your footsteps? I don't know, but that doesn't prevent me from getting around.

A Demonstration

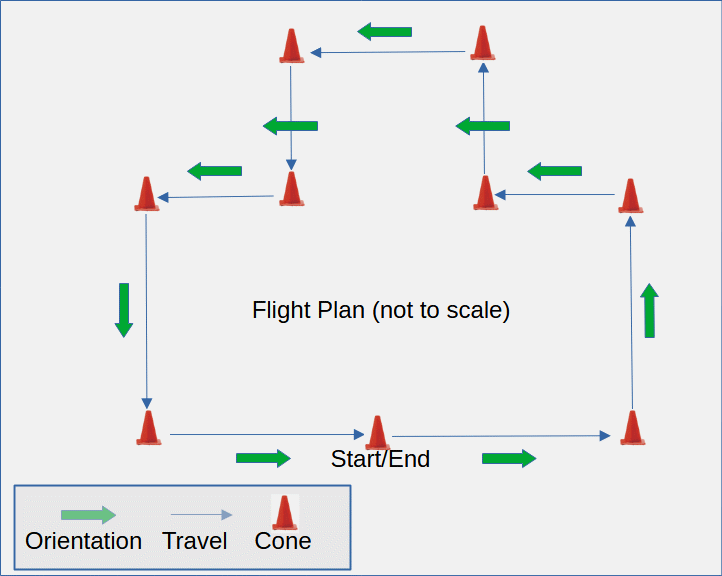

I set up cones in my garden in the shape of a closed loop in order to evaluate my dead reckoning algorithm (see below). The bottom side totals about 50 feet. The flight plan tests multiple dominant modes of movement: forward motion, yaw, crabbing (sideways motion left and right), ascending, descending, and stopped. In a perfect test, the inferred flight path should end up back where it started. Note that this is a flight path with lots of obstacles and changing textures—no simulated landscape here.

I flew a stock DJI Mini 2 drone over this flight path and captured the video. I fed this video into my algorithm and the result is shown below.

The output of the visual odometry algorithm includes three panels:

Video source (upper left). The algorithm overlays a green heading line and a blue horizon line on the input video. Only optic flow is used to infer heading and horizon. Text overlays describe primary motion states (forward travel, crab left, crab right, yaw left, yaw right, ascending, descending, and stopped) and relative heading (0–360°).

Optic flow rendered in false color (lower left). The color represents flow direction, and brightness represents rate or speed. The dots represent zero net horizontal flow (all horizontal optic flows diverge from heading) and zero net vertical flow (horizon). Heading and horizon are linear approximations of these data points.

The integrated path (right). The arrow pointer shows the orientation of the drone camera. It is hard to see when it is turning in place: it helps to view it on a monitor.
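The lower-left panel describes heading as a linear approximation of the points where horizontal flow is zero. One way to picture that idea, under the assumption of pure forward motion, is that horizontal flow grows roughly linearly with distance from the heading column, so a straight-line fit crosses zero at the heading. The sketch below uses that simplification with synthetic numbers; it is not the code behind the demo, and `fit_line` and `heading_column` are hypothetical helpers.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def heading_column(columns, horizontal_flow):
    """Image column where the fitted horizontal flow crosses zero."""
    slope, intercept = fit_line(columns, horizontal_flow)
    if slope == 0.0:
        raise ValueError("flow does not diverge; heading is undefined")
    return -intercept / slope

# Synthetic flow diverging from column 400 of a 640-pixel-wide frame.
# The alternating +/-0.3 wobble stands in for measurement noise (an assumption).
columns = list(range(0, 640, 40))
flow = [0.02 * (c - 400) + (0.3 if i % 2 else -0.3) for i, c in enumerate(columns)]
estimate = heading_column(columns, flow)
```

The same fit applied to vertical flow against image row would give a horizon estimate. Yaw and crabbing add flow components that this toy version ignores.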

It's not super accurate, is it?

The problem with dead reckoning is that slight errors get compounded over time into substantial errors. I can improve accuracy by integrating compass and inertial data. But it is already accurate enough to reach what is called a catchment area. This is a region where the bee can recognize where it is from landmarks and determine how far off course it is. I describe this in my post First Flight.
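How slight errors compound can be shown with a toy path integrator. The sketch below walks a closed square loop in small steps and adds a tiny, constant heading error to every step. The 50-foot side, the step count, and the 0.1° bias are made-up values chosen for illustration, not measurements from the test flight.

```python
import math

def integrate_path(steps):
    """Dead reckoning: accumulate (turn_radians, distance) steps into x, y."""
    x = y = heading = 0.0
    for turn, distance in steps:
        heading += turn
        x += distance * math.cos(heading)
        y += distance * math.sin(heading)
    return x, y

def square_loop(side=50.0, segments=50, turn_bias=0.0):
    """Steps for a closed square loop; turn_bias is a per-step heading error."""
    steps = []
    for side_index in range(4):
        for i in range(segments):
            # A 90-degree left turn starts every side except the first.
            turn = math.pi / 2 if (i == 0 and side_index > 0) else 0.0
            steps.append((turn + turn_bias, side / segments))
    return steps

def closure_error(steps):
    """Distance between the integrated end point and the starting point."""
    x, y = integrate_path(steps)
    return math.hypot(x, y)

perfect = closure_error(square_loop())
biased = closure_error(square_loop(turn_bias=math.radians(0.1)))
```

With no bias the loop closes to within floating-point error. With the bias, each step looks nearly perfect on its own, yet the integrated path no longer returns to its start. That gap is what a catchment area has to absorb.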

Another contributor to inaccuracy is that the horizontal field of view (FOV) of my test flight video is only 72°. The algorithm needs to infer motion at a nadir point on the ground that it cannot even see. 72° is far narrower than the 120° FOV of a fisheye lens or the combined 280° horizontal FOV of a honey bee. I think my algorithm works pretty well considering that it is practically peering through a porthole.

Also, my piloting skills are not very good. I engage in a lot of unnecessary yawing and crabbing just trying to stay as close as possible to the path marked by cones. I am frankly amazed that the algorithm works as well as it does.

So how does it work?

The short answer is that I exploit multiple regularities in optic flow as I suspect honey bees do. The long answer would not fit in this post, and it would only glaze the eyes of most readers. If you are someone who works in the robotics or computer vision field and are still curious, please DM me.

If there is interest, I may do a video tutorial on optic flow regularities. Very little is published on the analysis of optic flow, and what is published is very superficial. Leave a comment and let me know if you would find that interesting.

What is groundbreaking about this work?

All other visual odometry algorithms (that I am aware of) sacrifice low power and simplicity in pursuit of precision and accuracy. This one does not. Its goal is to be low power, simple, and accurate enough that it can navigate via dead reckoning, with obstacles in the way, from one catchment area to the next. My visual odometry algorithm is a promising first step toward a larger goal of understanding how cognition might have evolved. I describe that larger goal below.

Pre-Attentive Spatial Awareness

This visual odometry algorithm is one part of a project I call Pre-Attentive Spatial Awareness or PASA. Pre-attentive means that it is sensor-driven (feed-forward), innate, and unconscious. I developed this algorithm from video captured by a DJI Mini 2 drone. The next step is to capture fisheye video, magnetic compass data, and inertial data from my Big Bird drone. This data will be fused using a continuous attractor network to provide a much more accurate integrated path. I expect this software will run in real-time in Python on a drone-embedded Raspberry Pi computer with the visualization code disabled (see my post Beyond AI).
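To make the fusion step concrete, here is a deliberately simple stand-in. It is not an attractor network and not the planned PASA code. It is a plain weighted circular mean, which shows why heading estimates have to be combined as directions rather than as ordinary numbers. The cue values and weights are invented for illustration.

```python
import math

def fuse_headings(cues):
    """Weighted circular mean of (heading_degrees, weight) pairs, in [0, 360)."""
    x = sum(w * math.cos(math.radians(h)) for h, w in cues)
    y = sum(w * math.sin(math.radians(h)) for h, w in cues)
    if math.hypot(x, y) < 1e-12:
        raise ValueError("cues cancel out; fused heading is undefined")
    fused = math.degrees(math.atan2(y, x)) % 360.0
    # Guard against floating-point rounding up to exactly 360.0.
    return 0.0 if fused >= 360.0 else fused

# Hypothetical readings: vision says 350, compass says 20, inertial says 5.
# The inertial cue is trusted twice as much (weights are assumptions).
cues = [(350.0, 1.0), (20.0, 1.0), (5.0, 2.0)]
fused = fuse_headings(cues)

# A naive weighted arithmetic mean is badly wrong across the 0/360 wrap.
naive = sum(h * w for h, w in cues) / sum(w for _, w in cues)
```

The circular mean lands near 5°, while the naive average points almost backward. An attractor network would additionally carry its estimate forward in time, which this stateless sketch does not attempt.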

The second phase of PASA is to integrate visual indexing (see my post Visual Indexing). It will track local stationary objects to avoid collision and provide framing for object recognition. Visual indexing will also detect objects that are partially obscured and non-stationary—from a moving platform no less. Once again, it will accomplish this without GPS, stereopsis, or active ranging.

The third phase of PASA is to integrate landmark-based navigation with catchment regions (see my post First Flight). This will provide the periodic course correction needed because of visual odometry’s drift errors. At this point, PASA will be capable of generating its own allocentric (bird’s eye view) representation of a landscape populated with objects. This knowledge structure will resemble the non-symbolic representation described in How Mental Representations Emerge.

The fourth phase of PASA is to develop algorithms capable of solving navigational problems such as return-to-launch, calculating shortest-path between two points, retrieving next waypoint, awareness of obstacles out-of-sight, and the association of objects (food, water, home, car-keys) with locations.

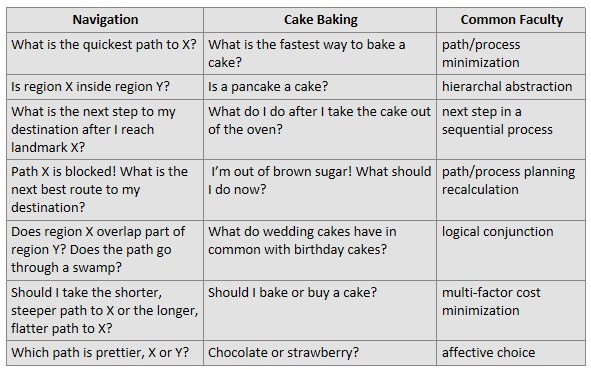

The last phase of PASA is neither pre-attentive nor visual. Its aim is to repurpose navigational knowledge representations and processes for non-navigational cognitive tasks like symbolic representation (where iconic landmarks are replaced by virtual, symbolic landmarks), logical reasoning (goal-driven conceptual 'navigation'), and innovation (allegorical processing)... the same sort of cognitive faculties described in my post What's So Great About Honey Bees? This last phase is driven by an intuition that symbolic representations, language, reasoning, innovation, and consciousness are all products of a repurposed or exapted navigational faculty in humans and other animals.

Questions

I believe the goal of science is not to provide answers but to generate better questions. An 'answer' is emotionally-satisfying in its closure. But closure is an empty promise, and few truths are complete or immutable. Only more questions can provide a deeper understanding. My hobby research has generated a lot of questions. Some of them include:

Do honey bees sense altitude? My odometry algorithm measures distance in terms of inferred-nadir optic flow. However, without knowing one's altitude above ground, optic flow cannot give you a consistent measure of distance. A fixed forward flight speed can cause fast optic flow if close to the ground or slow optic flow if high above the ground.

Is knowledge of altitude even necessary? Sometimes it helps to think like a bee. The technique honey bees use to land[5] suggests that knowledge of altitude is unnecessary. By maintaining a constant forward optic flow, honey bees may fly faster as they gain elevation above ground. Setting a limit on energy expenditure would also set a maximum height above visual ground. Visual ground could be fields, tree tops, or the roofs of buildings.
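The altitude question comes down to one relation: for level flight over flat ground, the angular rate of the ground directly below is ground speed divided by height. A minimal sketch, assuming flat ground and metric units (both are simplifications introduced here), shows the ambiguity and why holding flow constant sidesteps it:

```python
def nadir_flow(ground_speed, height):
    """Angular rate (rad/s) of the ground directly below: omega = v / h."""
    if height <= 0.0:
        raise ValueError("height must be positive")
    return ground_speed / height

def speed_holding_flow(target_flow, height):
    """Ground speed that keeps nadir flow at target_flow for a given height."""
    return target_flow * height

# Ambiguity: slow and low looks the same as fast and high.
low = nadir_flow(ground_speed=2.0, height=1.0)
high = nadir_flow(ground_speed=20.0, height=10.0)

# Holding flow at 2 rad/s while descending forces speed toward zero,
# without ever measuring speed or height directly.
descent = [(h, speed_holding_flow(2.0, h)) for h in (10.0, 5.0, 1.0, 0.1)]
```

This matches the landing strategy quoted in the footnote: a bee that keeps image velocity constant slows down automatically as the surface approaches.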

How do imperfect and unreliable sub-systems combine to deliver reliability and robustness? Honey bees have been around for 90 million years longer than we have. They must be doing something right. I suspect redundant systems fused together with attractor networks contribute to this reliability. Bees combine heading information from vision, vestibular senses, and magnetic sensing into a single attractor network[6]. Attractor networks are truly nonlinear connectionist networks that function like Extended Kalman filters[7]. The human hippocampus is flush with attractor networks.

How might navigation become exapted to solve non-navigational problems? All problems—navigation and otherwise—begin with a goal. How might the cognitive faculties originally used to navigate apply to baking a cake? See my post Beyond AI and the illustration below.

This post started out describing a honey bee navigational task that would challenge any human…much less one two weeks old with poor eyesight. I then demonstrated a visual odometry algorithm that I created based on honey bee neuroscience and the constraints that nature imposes on honey bees. This visual odometry algorithm simulates one part of a larger array of pre-attentive visual processes described elsewhere in Intelligence Evolved. My goal is not a system that provides maps or travel directions, but a system that can learn and reason about where it has been. From there, I believe it will be a short step to navigating a world of transcendental concepts. Stay tuned.

This research project is how I spend my free time. I am entirely self-funded at a rate much less than golfing or boating. If you find this interesting or important or both, perhaps you can support me with an annual paid subscription.

Better yet, if you like Intelligence Evolved, please recommend it to your friends. I have a book to publish, but I can't do that until I have a platform with a readership of some size. Please help me spread the word to like-minded readers.

Footnotes

[1] Facts about honey bees’ first flights:

Age at first flight: 3-14 days, mean 6.2

Number of flights before foraging: 1-17, mean 5.6

Mean age of onset of pollen foraging: 14 days

19% of foragers perform 50% of total trips—this minority accounts for a disproportionate production of pollen and nectar

Klein, S., Pasquaretta, C., He, X.J. et al. Honey bees increase their foraging performance and frequency of pollen trips through experience. Sci Rep 9, 6778 (2019).

For more on honey bee navigation, see my post First Flight.

[2] If you hold your thumb at arm's length, the nail on your thumb represents roughly one degree of arc. If you were to draw equally-spaced, parallel lines on your thumbnail, you could see each line until that number reached 66 (0.015 degrees of arc). That represents the visual acuity of an average human. Ten lines (0.1 degrees) represent the legal definition of blindness. The entire thumbnail (1 degree of arc) represents the visual acuity of a honey bee!

[3] The goal here is not to build robots but to understand how natural intelligence works. You could call this an exercise in biomimetic computer vision, computational neuroscience or even computational ethology. By simulating natural processes on computers and airborne drones, we can confirm or refute whether a hypothesis is even workable.

[4] This demo was executed on a 13th Gen Intel Core i7-13700 Windows 11 machine running Python 3.12.1 (single core). I used Clipchamp to screen-record my desktop as I processed the video...so you are seeing the results of processing roughly 5400 frames of video in real time. The demo (without screen recording) uses less than 25% of the CPU. The video input is a 3-minute, 1920×1080 resolution, 30fps MP4 video from a DJI Mini 2 drone. I can pull out the code that produces these visualizations (these 3 panels) when I need to run the code in an actual application with more modest computing resources. The code uses the NumPy library, but no neural networks were harmed (or used) in this algorithm.

[5] Srinivasan, M., Zhang, S., Chahl, J., Barth, E., & Venkatesh, S. (2000). How honeybees make grazing landings on flat surfaces. Biological Cybernetics, 83, 171–183. https://doi.org/10.1007/s004220000162.

From the paper’s abstract:

“Freely flying bees were filmed as they landed on a flat, horizontal surface, to investigate the underlying visuomotor control strategies. The results reveal that 1) landing bees approach the surface at a relatively shallow descent angle; 2) they tend to hold the angular velocity of the image of the surface constant as they approach it; and 3) the instantaneous speed of descent is proportional to the instantaneous forward speed. These characteristics reflect a surprisingly simple and effective strategy for achieving a smooth landing, by which the forward and descent speeds are automatically reduced as the surface is approached and are both close to zero at touchdown. No explicit knowledge of flight speed or height above the ground is necessary.”

[6] Stone, T., Webb, B., Adden, A., Weddig, N. B., Honkanen, A., Templin, R., Wcislo, W. T., Scimeca, L., Warrant, E., & Heinze, S. (2017). An Anatomically Constrained Model for Path Integration in the Bee Brain. Current Biology, 27(20), 3069–3085.e11. https://doi.org/10.1016/j.cub.2017.08.052.

[7] A Kalman filter is a sophisticated kind of linear filter used in missiles, drones, moon landers, self-landing rocket boosters, and Segway scooters. Its extension to nonlinear systems, which linearizes around the current estimate, is called an Extended Kalman Filter. I learned about Kalman filters as an electrical engineering student in control theory class.

Regarding knowing the altitude in order to be able to estimate speed using optical flow

While absolute ground speed estimation is tricky because there's an unknown wind speed component to it, it is easy to estimate a change in speed. For example, when the aircraft accelerates or decelerates, the change in speed over a short period (a few seconds) can be computed from the IMU, sensed by an airspeed sensor, or inferred by other means, e.g. from propeller rpm, craft attitude, and air density (temperature, pressure) measured during some initial calibration flights.

Then if the robot knows its speed increased by, e.g., 2 m/s between Ta and Tb, it has (Va, Oa) and (Vb, Ob), where Oa and Ob are the optical flow magnitudes measured at points a and b, Va is the unknown velocity at Ta, and Vb = Va + 2 m/s. Since the ratio Ob/Oa can also be measured, Va can be inferred, simply because in horizontal flight the optical flow measured vertically under the craft is proportional to ground speed.

All this assumes the craft also maintains a fixed altitude, or uses some means to measure the change in altitude between Ta and Tb (IMU, altimeter) and accounts for it too.
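The inference described above reduces to one line of algebra. With flow proportional to speed at a fixed height, Ob/Oa = (Va + Δv)/Va, so Va = Δv / (Ob/Oa − 1). A small sketch, assuming fixed altitude and a noise-free speed change (the numbers are invented for the example):

```python
def initial_speed(delta_v, flow_a, flow_b):
    """Recover speed at Ta from a known speed change and two nadir flow readings."""
    ratio = flow_b / flow_a
    if ratio == 1.0:
        raise ValueError("flow did not change; speed cannot be recovered")
    return delta_v / (ratio - 1.0)

def height_from(speed, flow):
    """With speed known, height follows from flow = speed / height."""
    return speed / flow

# Ground truth the craft does not know: 6 m/s at 12 m height, then 8 m/s.
true_height = 12.0
flow_a = 6.0 / true_height
flow_b = 8.0 / true_height

va = initial_speed(delta_v=2.0, flow_a=flow_a, flow_b=flow_b)
height = height_from(va, flow_a)
```

Note that the estimate divides by a small number when the speed change is small relative to the speed itself, so measurement noise in either flow reading would be amplified.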

I recall that in sailing, when one's own boat is in motion and another boat is seen as "staying" at the same horizon angle relative to your boat's direction of travel, then you are on a collision course. Even when the other boat is stationary, this simple visual cue means your boat is for some reason spiraling towards it and will eventually collide. The exception is when both ships have straight parallel paths with the exact same speed, but that's quite improbable. I think this could be a simple landing strategy to use when the "insect" is able to visually track the desired landing spot: somehow keep the optical flow of the target at zero or close to it.